With AccessAlly, you can upload MP3s, PDFs, and other file types and protect them with tags, just like any other page on your membership site.


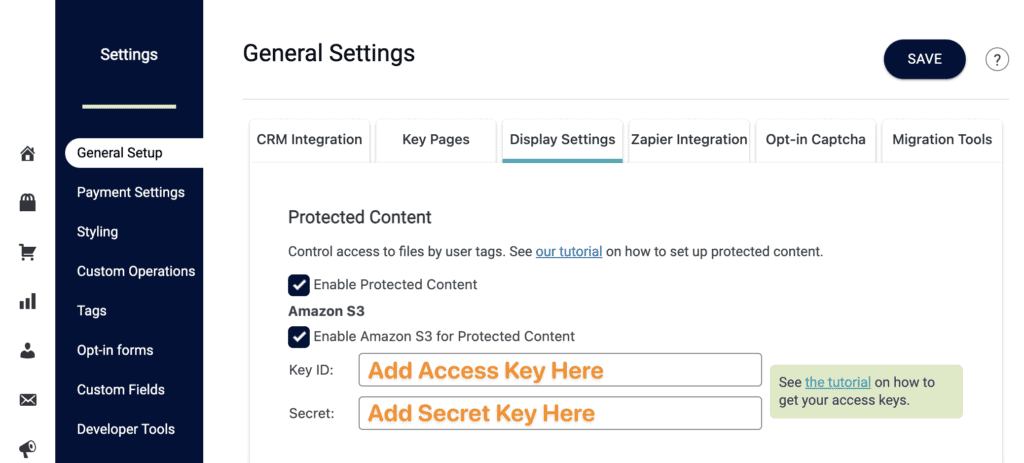

Review Protected Content Settings

Location: AccessAlly → Settings → Display Settings

There are two options for using this feature:

- For files under 10 MB, confirm the Enable Protected Content box is checked.

- For larger files, you can enable Amazon S3. Need to create Amazon keys? See this article.
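If you'd like to sort a batch of files before uploading, the 10 MB guideline above can be written as a quick check. This is a minimal Python sketch, not part of AccessAlly: `choose_storage` and `LOCAL_LIMIT_BYTES` are hypothetical names, and it assumes "10 MB" means 10 × 1024 × 1024 bytes.

```python
import os

# Assumption: AccessAlly's "10 MB" limit is treated here as 10 MiB.
LOCAL_LIMIT_BYTES = 10 * 1024 * 1024


def choose_storage(size_bytes: int) -> str:
    """Hypothetical helper: 'local' for files under the limit, else 'amazon_s3'."""
    if size_bytes < 0:
        raise ValueError("size_bytes must be non-negative")
    return "local" if size_bytes < LOCAL_LIMIT_BYTES else "amazon_s3"


def choose_storage_for_file(path: str) -> str:
    """Check a file on disk before uploading it."""
    return choose_storage(os.path.getsize(path))


if __name__ == "__main__":
    # A 2.5 MB PDF stays local; a 48 MB audio file goes to Amazon S3.
    for size in (2_500_000, 48_000_000):
        print(size, "->", choose_storage(size))
```

A file of exactly 10 MB is not "under" the limit, so the sketch sends it to Amazon S3.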

Amazon S3 Users

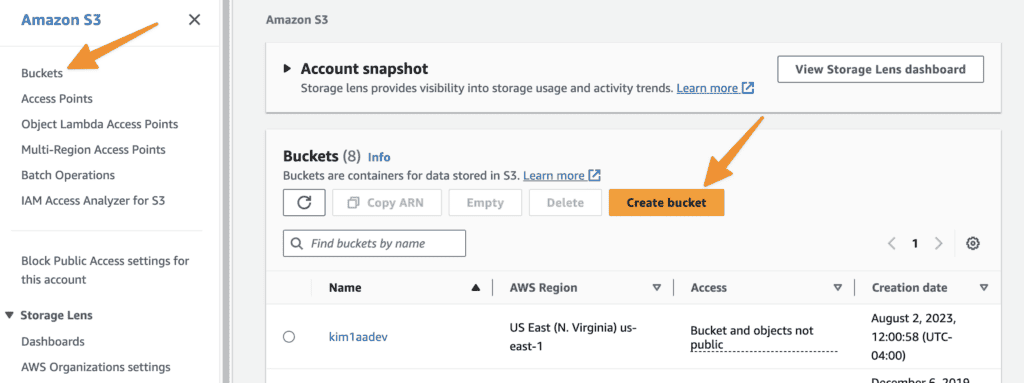

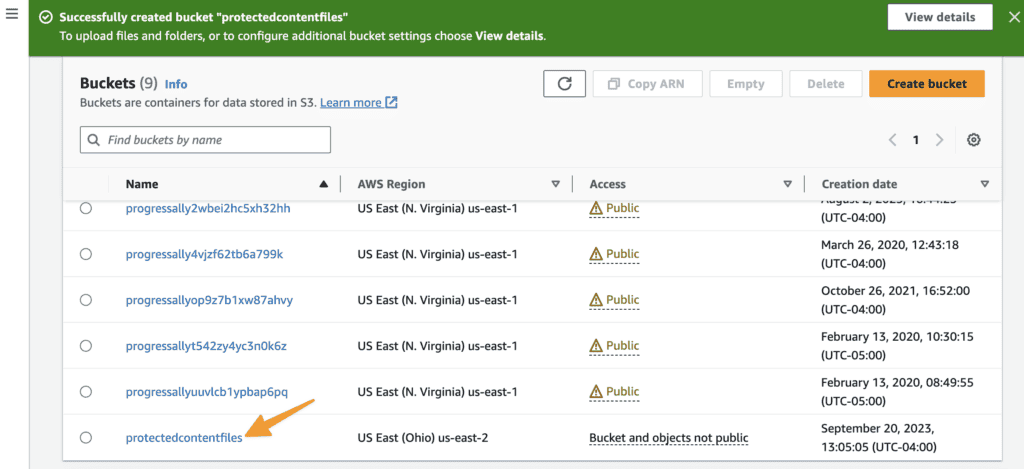

Creating Buckets for Amazon S3

If you use Amazon S3 to store your files, we recommend creating “buckets.” Buckets make your files easier to find and organize, and you can create as many as you like.

Visit this link https://console.aws.amazon.com/s3/ → click “Buckets” → Create a Bucket

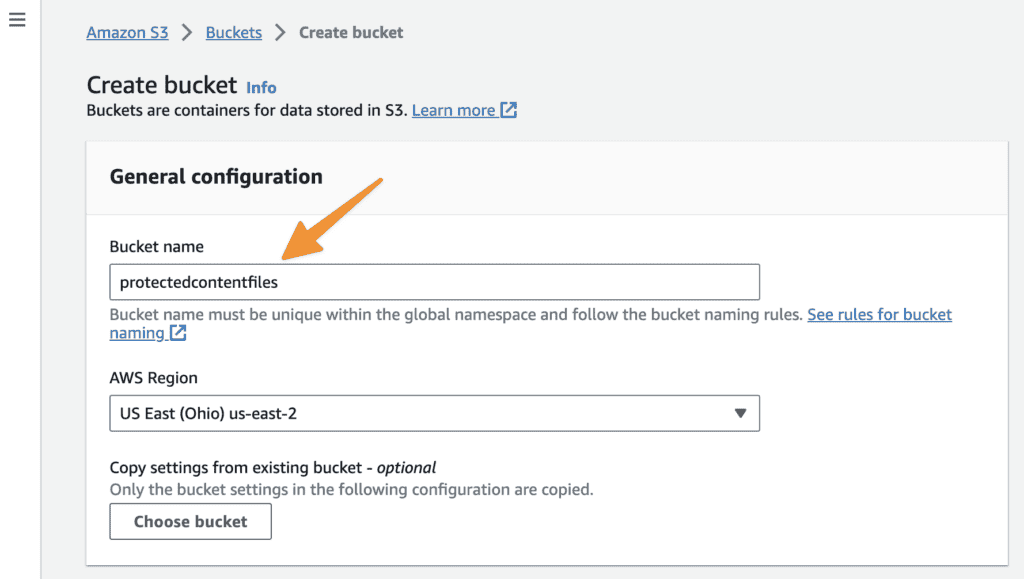

Name your bucket.

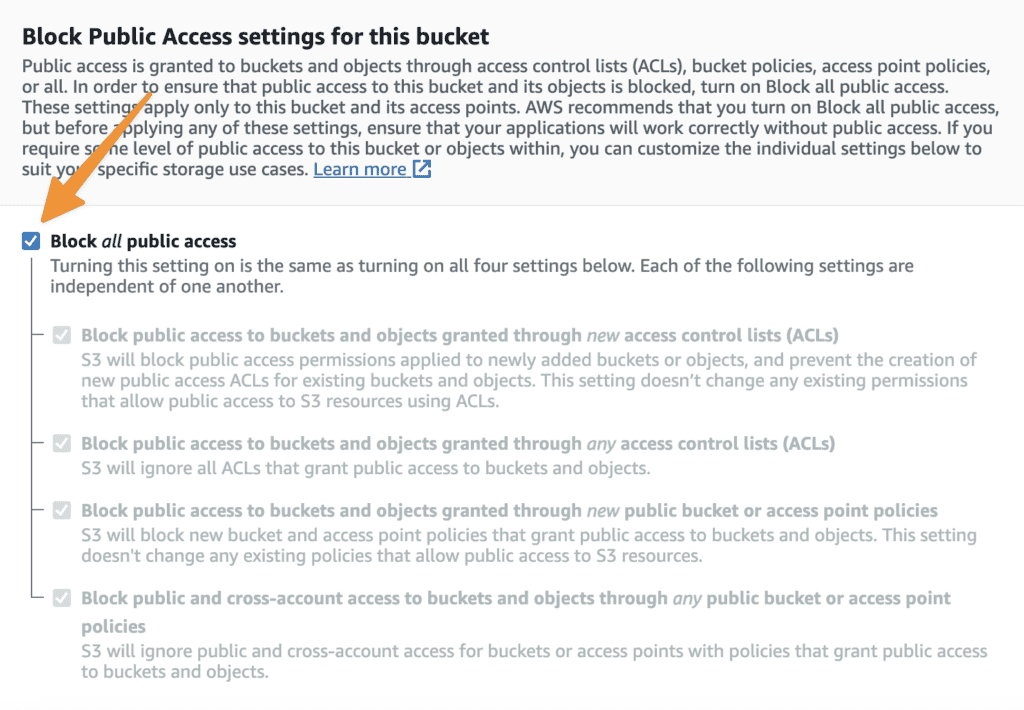

Confirm “Block all public access” is checked to protect the files you upload from unauthorized visitors.

Click your newly created bucket.

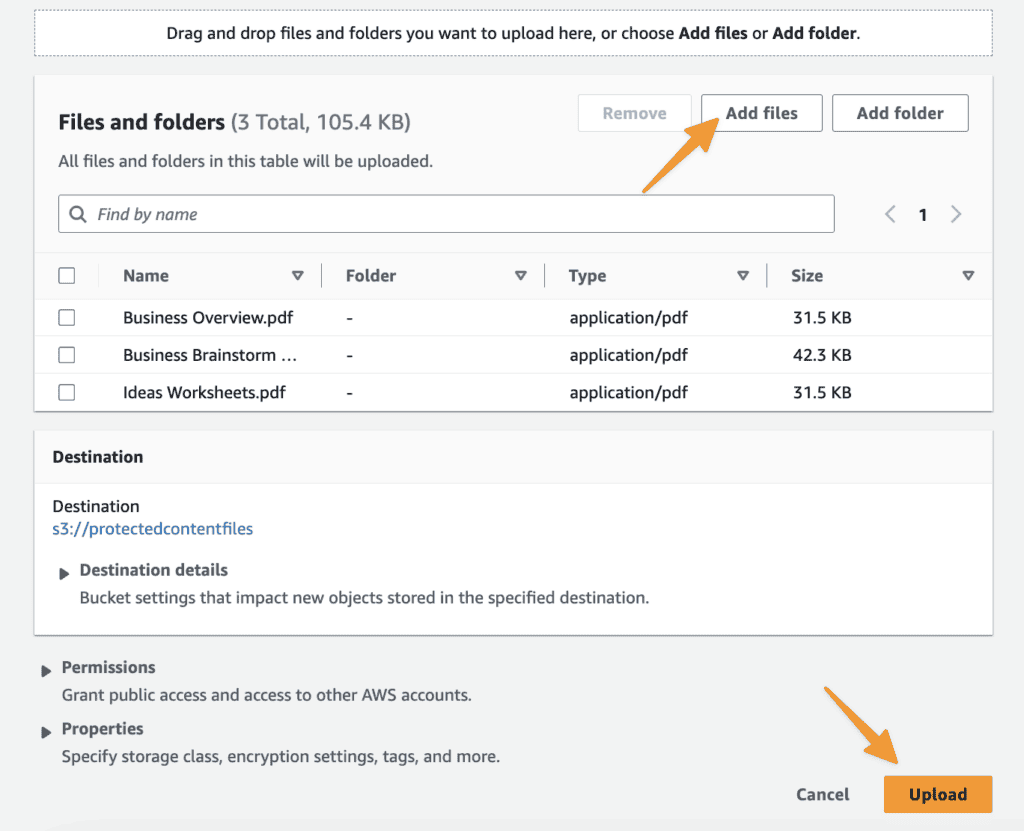

Start adding your files. You can add them one at a time or several at once. Click Upload once all files appear in the list.

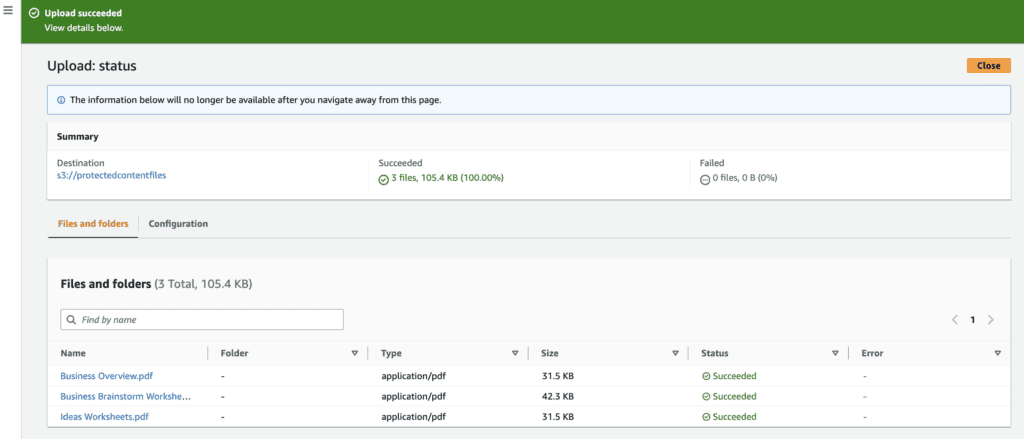

Confirm successful file upload and close this window.

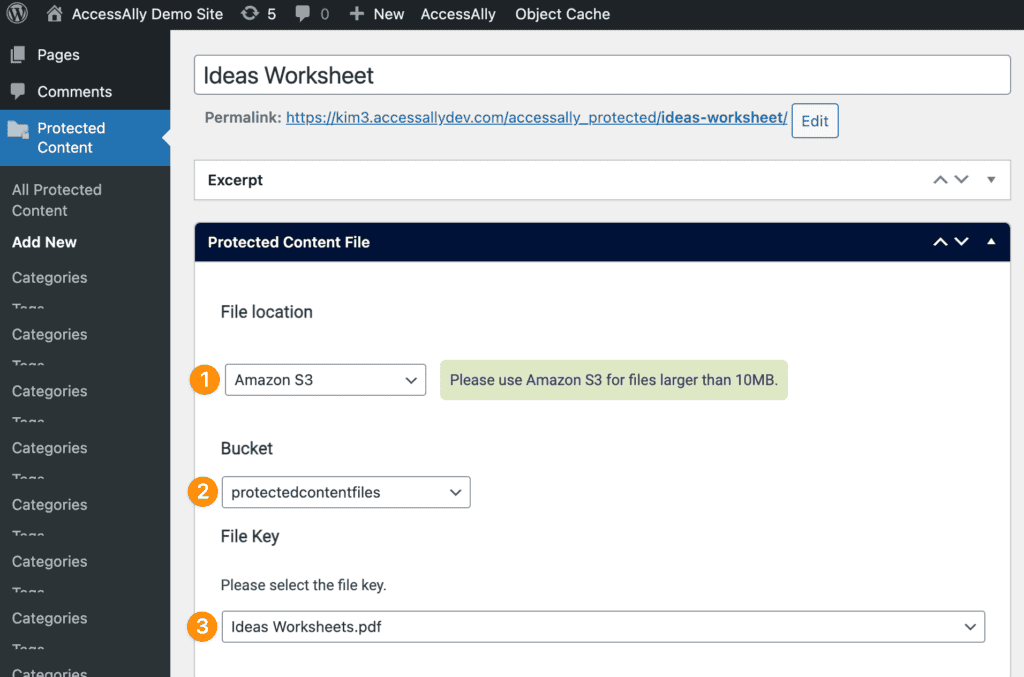
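If you manage AWS from code, you can double-check the “Block all public access” setting from the bucket steps above. In the S3 console, that setting turns on four individual flags. The Python sketch below checks a response shaped like the one boto3's `get_public_access_block(Bucket=...)` returns; `blocks_all_public_access` and the sample response are hypothetical, and the sample is hard-coded so the example runs without AWS credentials.

```python
# The four flags that "Block all public access" enables in the S3 console.
REQUIRED_FLAGS = (
    "BlockPublicAcls",
    "IgnorePublicAcls",
    "BlockPublicPolicy",
    "RestrictPublicBuckets",
)


def blocks_all_public_access(response: dict) -> bool:
    """Hypothetical helper: True only if every public-access-block flag is on."""
    config = response.get("PublicAccessBlockConfiguration", {})
    return all(config.get(flag) is True for flag in REQUIRED_FLAGS)


# Illustrative sample for a bucket with "Block all public access" checked.
# These values are made up for the example, not fetched from AWS.
sample_response = {
    "PublicAccessBlockConfiguration": {
        "BlockPublicAcls": True,
        "IgnorePublicAcls": True,
        "BlockPublicPolicy": True,
        "RestrictPublicBuckets": True,
    }
}

print(blocks_all_public_access(sample_response))  # True
```

With boto3 installed and credentials configured, you could pass in `boto3.client("s3").get_public_access_block(Bucket="your-bucket-name")` instead of the sample. If no public access block has been set on the bucket at all, that call raises an error rather than returning the flags.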

Confirm Amazon S3 Integration

Go to Protected Content → Add New

Amazon S3 is integrated if you can select Amazon S3, your bucket, and your files.

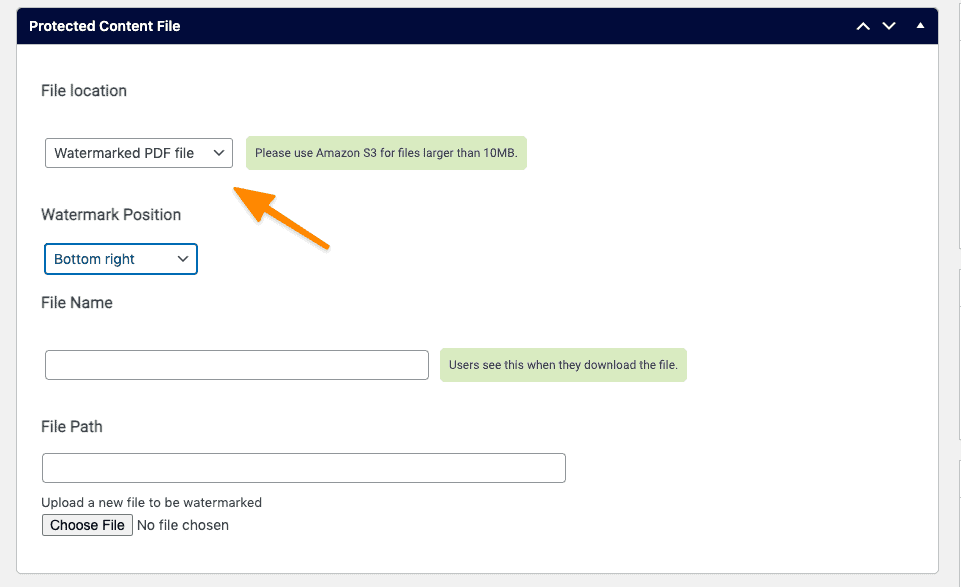

Watermark Protected Content PDFs

*This feature is not compatible with Amazon S3 files.

1. Select Watermarked PDF file and add your desired watermark position.

2. Upload your PDF and save your Protected Content item.

3. Test with the User Switching plugin or a test user to confirm the watermark.

Add Protected Content Files

There are two ways you can add protected content files to your site.

- Add in Protected Content Feature (great for single file downloads where you do not have additional content to add to the file)

- Add Protected Content to an Offering (great if there is a course or additional content that goes with the file)

Option 1: Add in Protected Content Feature (No Offering Required)

*Try this option if you want to deliver a standalone file, such as an e-book or audio download, without a page or post in an offering. No offering is required with this option.

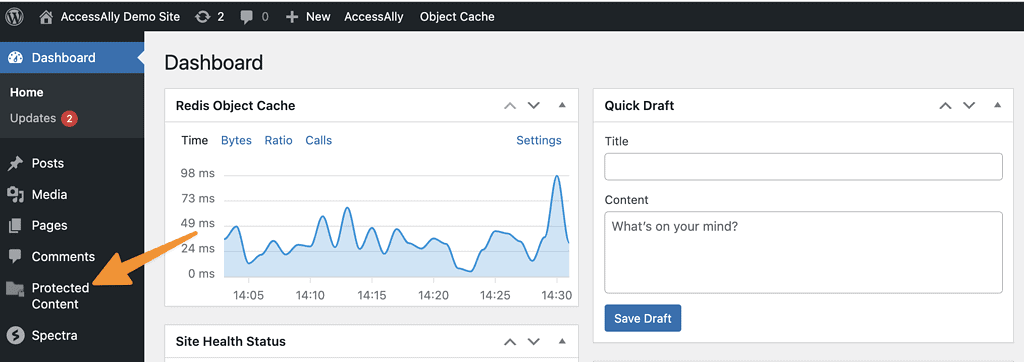

For the most flexibility, add protected content from the WordPress Dashboard. This option works well when the same piece of protected content is used by several offerings on your site, because you can add each offering's tag to grant access to this content.

Here you can add new files, edit existing ones, or see the categories and tags in use for your files. To add a new piece of protected content, select:

Add New

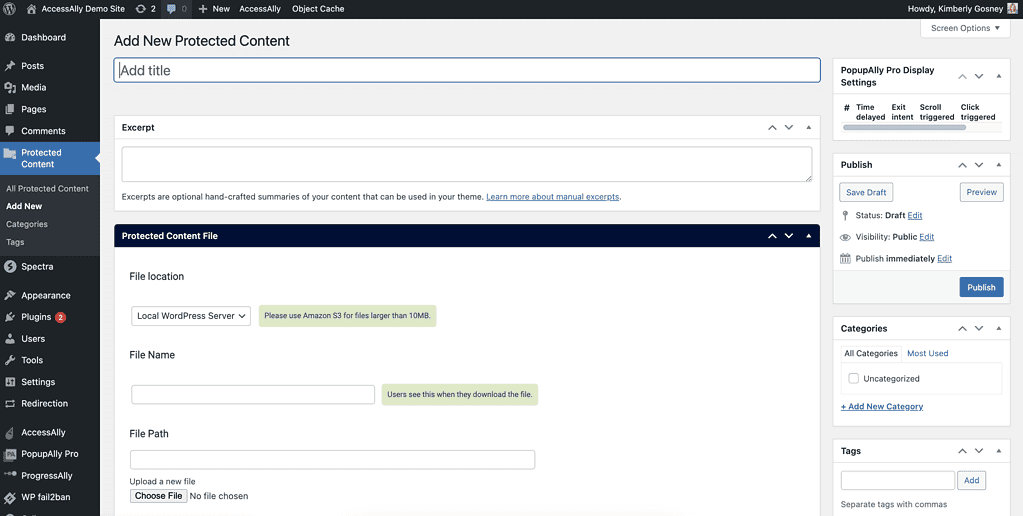

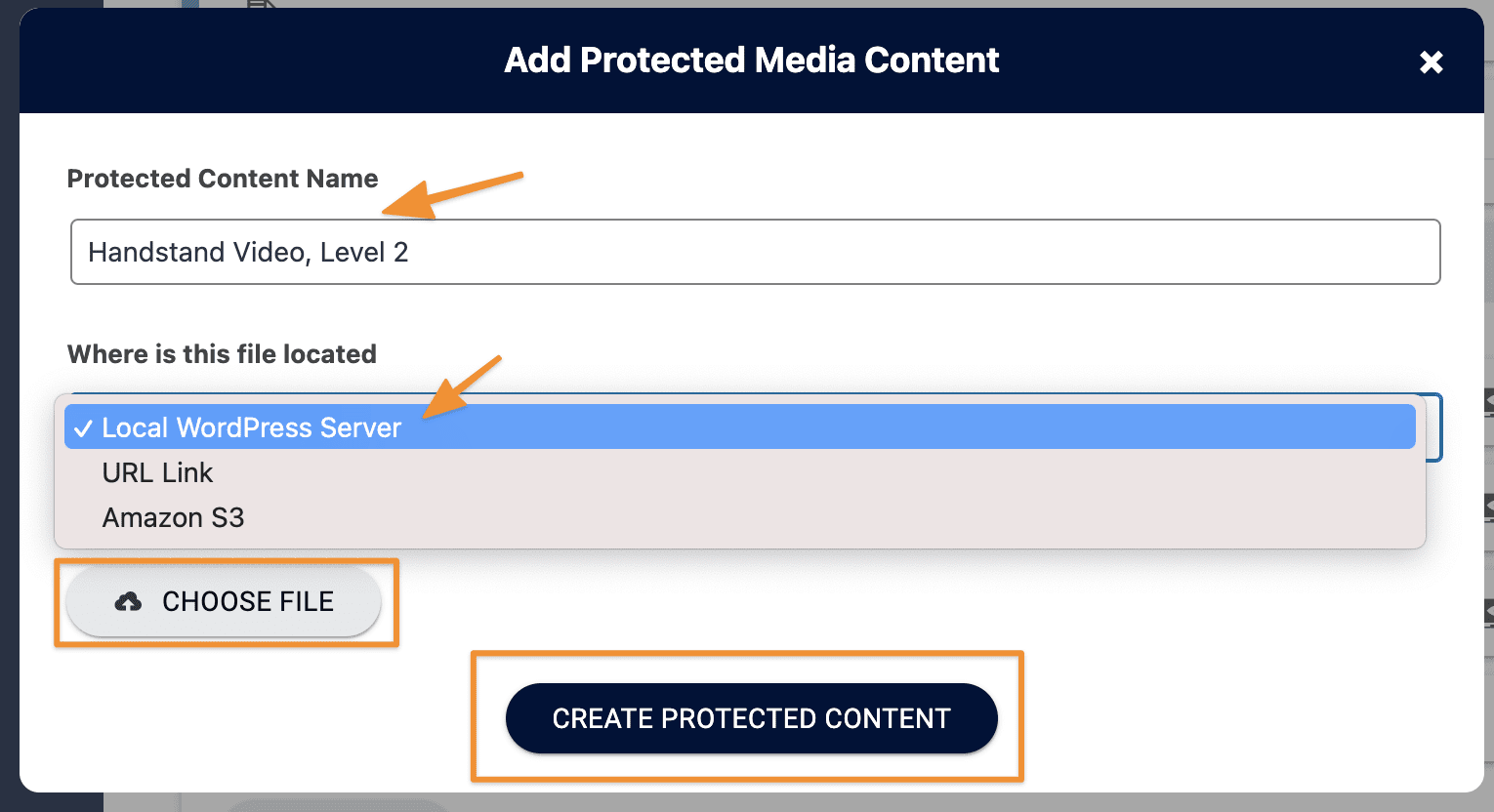

- Name your file

- File location is local unless integrated with Amazon S3

- Adjust file name if desired

- Upload your file (or select file from Amazon S3 bucket)

You can add WordPress categories and tags on the right (note these are not AccessAlly page access tags!)

Protected Content Permissions

This feature works much like offering access: items are shown or hidden based on the AccessAlly tags a client has. If you'd like to add images or set conditions for when your protected content can be seen, you can set them up here.
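To picture how tag-based permissions decide what a member sees, here's a simplified Python model. It is hypothetical and not AccessAlly's code: it assumes a member can open an item if they have at least one of the item's permission tags, which mirrors sharing one file across several offerings in Option 1 above. The tag names are made up.

```python
from dataclasses import dataclass, field


@dataclass
class ProtectedItem:
    """Hypothetical stand-in for a Protected Content item."""
    name: str
    permission_tags: set[str] = field(default_factory=set)


def can_access(user_tags: set[str], item: ProtectedItem) -> bool:
    """Assumed rule: access if the user shares at least one tag with the item."""
    return bool(user_tags & item.permission_tags)


# One e-book shared by two offerings, each with its own access tag.
ebook = ProtectedItem("Starter e-book", {"Course A - Access", "Bundle - Access"})

print(can_access({"Course A - Access"}, ebook))  # True
print(can_access({"Newsletter"}, ebook))         # False
```

If your setup requires every tag instead of any one, the check would use `item.permission_tags <= user_tags`.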

Option 2: Add Protected Content to an Offering

Adding your protected content in an AccessAlly offering applies all page tags and redirects at the time of setup. This option is great if this piece of protected content belongs to a specific offering.

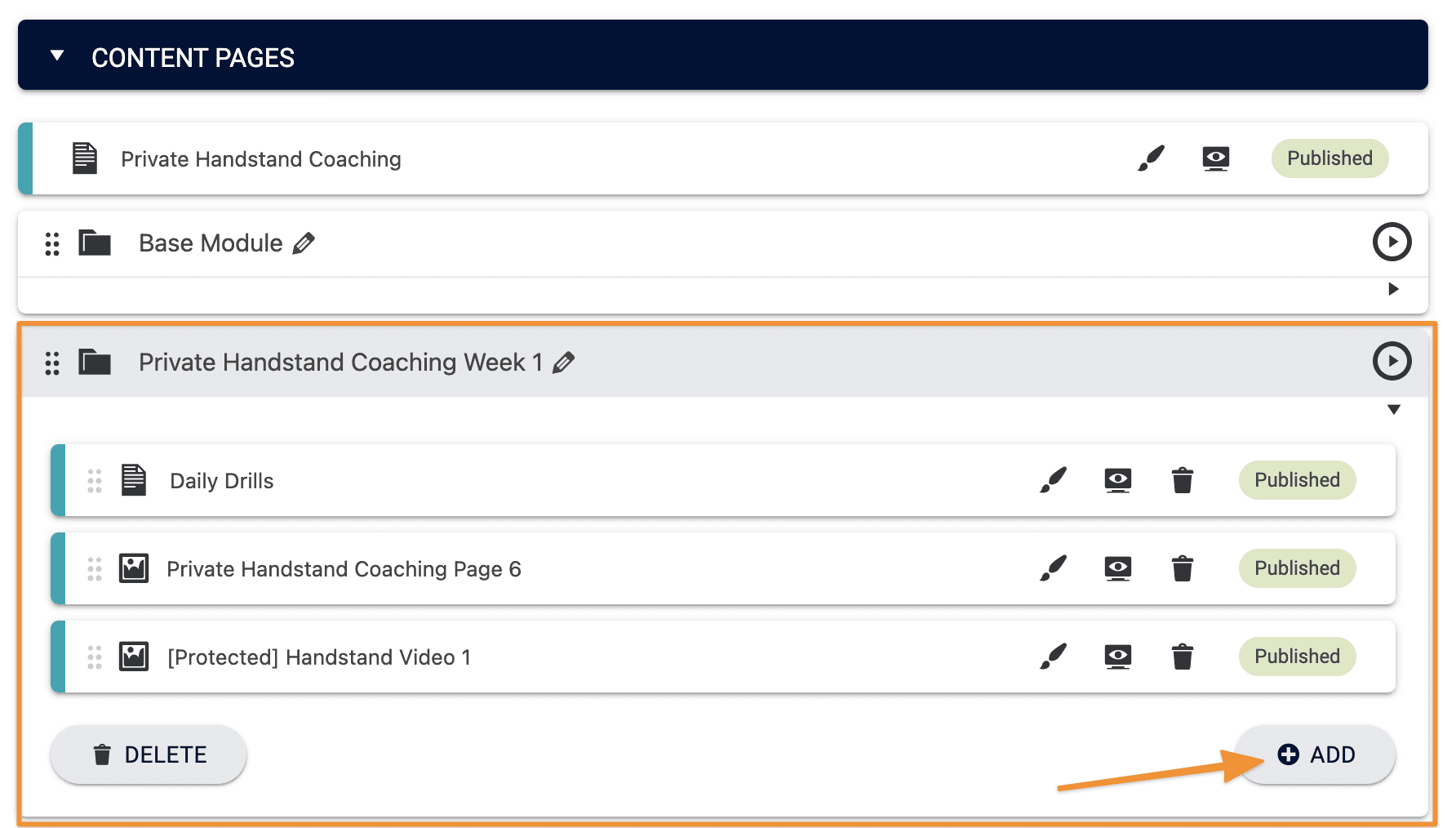

Go to AccessAlly → Offerings → select the offering. On the Content tab, click the “+Add” button in the module where you'd like to add a protected content file.

Name your protected content, specify where the file is located, and choose the file. If your file is stored on Amazon S3, see the Amazon S3 section earlier in this article.

Your content will be added as a draft. Click Save to publish your new protected content.

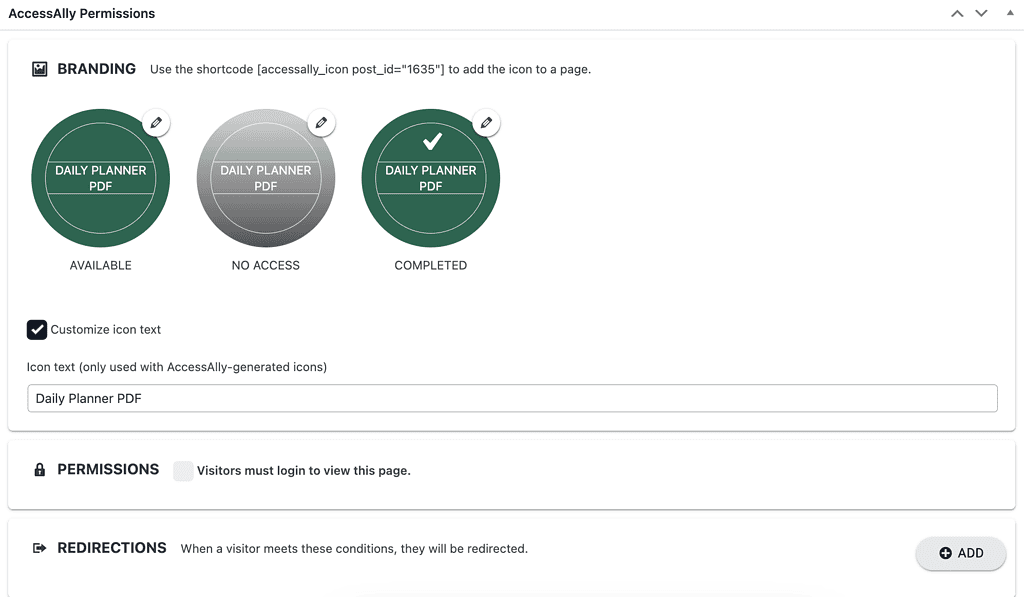

Editing Protected Content in an Offering

When you add protected content through the Offerings wizard, permission tags and redirects are applied automatically. After the protected content is created and saved, you can edit its permissions, redirect, and icons by clicking the pencil icon.

Clicking the edit icon in the offering takes you to the Protected Content feature with this item open in AccessAlly.


Testing Protected Content

Test your protected content with the User Switching plugin or a test user. A successful test reveals the protected content file upon clicking the link.

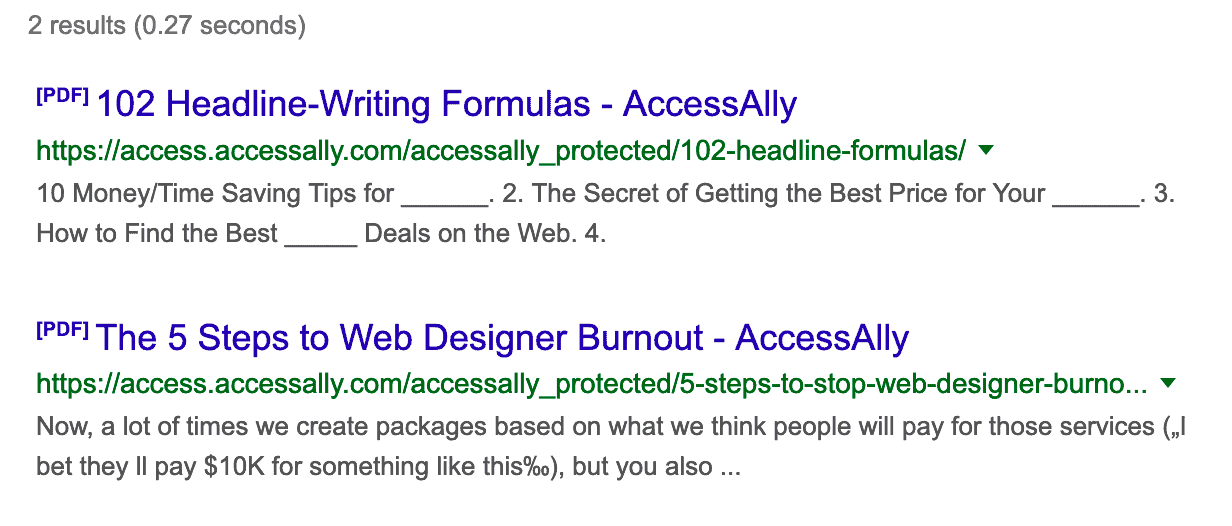

Remove Protected Content From Google Search Results

If you’re using AccessAlly’s protected content, you might do a search in Google or another search engine and find some of your files are indexed and showing up in the results.

The good news is that no one can access these files unless they’re logged in and have the correct tags.

But it isn't the best user experience to have people click through to a protected file they can't open, so you might want to remove these files from search engines entirely.

Here's how to do that!

Blocking Files In Robots.txt

The best way to keep search engines from indexing and linking to files you'd rather keep private is to edit your site's robots.txt file.

This file sits in your site's root directory and tells search engines what's fair game and what's off limits.

This is the text you’ll want to add to this file to stop the search engines from indexing your protected content files:

```
User-agent: *
Disallow: /accessally_protected/*
```
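Search engines that follow the robots.txt standard (RFC 9309) treat each Disallow path as a prefix, where `*` matches any run of characters and a trailing `$` anchors the end of the URL. The Python sketch below shows that matching for the rule above, so you can see which URLs it covers. It is a simplified illustration: `is_disallowed` is a hypothetical helper that ignores Allow rules and longest-match precedence. Keep in mind robots.txt is a request that well-behaved crawlers honor, not an access control; your tags still do the actual protecting.

```python
import re


def rule_to_regex(rule_path: str) -> re.Pattern:
    """Translate a Disallow path into a regex using RFC 9309 wildcards."""
    anchored = rule_path.endswith("$")
    if anchored:
        rule_path = rule_path[:-1]
    # Escape everything, then turn each escaped "*" back into ".*".
    pattern = re.escape(rule_path).replace(r"\*", ".*")
    return re.compile(pattern + ("$" if anchored else ""))


def is_disallowed(path: str, disallow_rules: list[str]) -> bool:
    """True if any non-empty Disallow rule matches the start of the URL path."""
    return any(rule_to_regex(rule).match(path) for rule in disallow_rules if rule)


rules = ["/accessally_protected/*"]
print(is_disallowed("/accessally_protected/ebook.pdf", rules))  # True
print(is_disallowed("/blog/welcome/", rules))                   # False
```

Because Disallow paths already match by prefix, `/accessally_protected/` on its own covers the same URLs as `/accessally_protected/*`.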

Two Main Ways of Editing Robots.txt

If you've never edited your robots.txt file before, there are two main ways to do it. Here they are, along with resources that walk you through the steps:

Article FAQs

A: Visit this article to see all the different ways you can display your protected content files.

A: Try flattening your PDF files before uploading to Protected Content. Flattening converts layers, transparencies, and fonts into a single image or vector object. In the case of fonts, it essentially “bakes” the font into the design so it’s no longer editable text but a visual element.

This prevents problems such as font substitution and color shifts, because the browser treats your PDF like a series of images.

A: Yes, you can! See Option 1 in this article, “Add in Protected Content Feature (No Offering Required).”

A: Add videos to pages in offerings using the media objective in ProgressAlly or with embed code. Videos are not compatible with the protected content feature.

A: Yes you can!

A: Yes, you can! With a Canva Pro plan, add your shared template using the URL link option so it links out to the template file.